After the initial hype around Capsule Networks in 2017, interest declined somewhat, and most people went back to training bigger models or stripped-down models for mobile use. However, preserving topology should let neural networks learn more from less training data. For this example, we’ll use the CapsNet implementation by XifengGuo, which is built on TF/Keras, and see how it performs on KMNIST and K-49.

KMNIST

This is straightforward. We have to change the load function in capsulenet.py from

    def load_mnist():
        # the data, shuffled and split between train and test sets
        from keras.datasets import mnist
        (x_train, y_train), (x_test, y_test) = mnist.load_data()

        x_train = x_train.reshape(-1, 28, 28, 1).astype('float32') / 255.
        x_test = x_test.reshape(-1, 28, 28, 1).astype('float32') / 255.
        y_train = to_categorical(y_train.astype('float32'))
        y_test = to_categorical(y_test.astype('float32'))
        return (x_train, y_train), (x_test, y_test)

to

    def load_mnist():
        # the data, shuffled and split between train and test sets
        x_train = np.load("./Kuzushiji-MNIST/data/kmnist-train-imgs.npz")['arr_0']
        x_test = np.load("./Kuzushiji-MNIST/data/kmnist-test-imgs.npz")['arr_0']
        y_train = np.load("./Kuzushiji-MNIST/data/kmnist-train-labels.npz")['arr_0']
        y_test = np.load("./Kuzushiji-MNIST/data/kmnist-test-labels.npz")['arr_0']

        x_train = x_train.reshape(-1, 28, 28, 1).astype('float32') / 255.
        x_test = x_test.reshape(-1, 28, 28, 1).astype('float32') / 255.
        y_train = to_categorical(y_train.astype('float32'))
        y_test = to_categorical(y_test.astype('float32'))
        return (x_train, y_train), (x_test, y_test)

and we can run the basic CapsNet on this dataset.

Note that this is not a proper train/validation/test split: the loader only returns a training set and a test set. However, it is enough to get a first impression of CapsNets on KMNIST.
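If a separate validation set is wanted, one option is to hold out part of the training data. The following is only a sketch under my own assumptions: `split_train_valid`, the 10% fraction and the seed are hypothetical and not part of the CapsNet repository, and the demo arrays merely mimic the KMNIST shapes.

```python
import numpy as np

def split_train_valid(x, y, valid_fraction=0.1, seed=0):
    """Shuffle (x, y) together and hold out a fraction for validation.

    Hypothetical helper, not part of capsulenet.py.
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(x))          # one shared shuffle for x and y
    n_valid = int(len(x) * valid_fraction)
    valid_idx, train_idx = order[:n_valid], order[n_valid:]
    return (x[train_idx], y[train_idx]), (x[valid_idx], y[valid_idx])

# Stand-in arrays with KMNIST-like shapes; real data would come from load_mnist().
x_demo = np.zeros((1000, 28, 28, 1), dtype="float32")
y_demo = np.zeros((1000, 10), dtype="float32")

(x_tr, y_tr), (x_va, y_va) = split_train_valid(x_demo, y_demo)
print(x_tr.shape, x_va.shape)
```

With a split like this, the test set would only be touched once, after training has finished.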

I doubt that y_train and y_test need to be converted to float32 before the one-hot encoding.
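To illustrate why the cast looks redundant, here is a plain NumPy one-hot encoder. It is a stand-in that I wrote for this check, not Keras' `to_categorical`, so it only shows the principle: labels end up being used as integer column indices, and casting them to float32 first does not change the result.

```python
import numpy as np

def one_hot(labels, num_classes):
    """NumPy one-hot encoding (a stand-in, not the Keras implementation)."""
    labels = np.asarray(labels).astype("int64")   # labels become column indices
    encoded = np.zeros((labels.shape[0], num_classes), dtype="float32")
    encoded[np.arange(labels.shape[0]), labels] = 1.0
    return encoded

labels_uint8 = np.array([0, 3, 9, 3], dtype="uint8")

from_int = one_hot(labels_uint8, 10)
from_float = one_hot(labels_uint8.astype("float32"), 10)

# Compare the encoding with and without the float32 cast.
print(np.array_equal(from_int, from_float))
```

Whether the installed Keras version behaves the same way is something I would verify before removing the cast from capsulenet.py.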

    Namespace(batch_size=100, debug=False, digit=5, epochs=50, lam_recon=0.392, lr=0.001, lr_decay=0.9, routings=3, save_dir='./result', shift_fraction=0.1, testing=False, weights=None)

    __________________________________________________________________________________________________
    Layer (type)                    Output Shape         Param #     Connected to
    ==================================================================================================
    input_1 (InputLayer)            (None, 28, 28, 1)    0
    __________________________________________________________________________________________________
    conv1 (Conv2D)                  (None, 20, 20, 256)  20992       input_1[0][0]
    __________________________________________________________________________________________________
    primarycap_conv2d (Conv2D)      (None, 6, 6, 256)    5308672     conv1[0][0]
    __________________________________________________________________________________________________
    primarycap_reshape (Reshape)    (None, 1152, 8)      0           primarycap_conv2d[0][0]
    __________________________________________________________________________________________________
    primarycap_squash (Lambda)      (None, 1152, 8)      0           primarycap_reshape[0][0]
    __________________________________________________________________________________________________
    digitcaps (CapsuleLayer)        (None, 10, 16)       1474560     primarycap_squash[0][0]
    __________________________________________________________________________________________________
    input_2 (InputLayer)            (None, 10)           0
    __________________________________________________________________________________________________
    mask_1 (Mask)                   (None, 160)          0           digitcaps[0][0]
                                                                     input_2[0][0]
    __________________________________________________________________________________________________
    capsnet (Length)                (None, 10)           0           digitcaps[0][0]
    __________________________________________________________________________________________________
    decoder (Sequential)            (None, 28, 28, 1)    1411344     mask_1[0][0]
    ==================================================================================================
    Total params: 8,215,568
    Trainable params: 8,215,568
    Non-trainable params: 0
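The parameter counts in the summary can be reproduced by hand, which is a quick sanity check on the architecture. The sketch below rests on assumptions that are not printed in the summary itself: 9x9 kernels for both convolutions, a bias-free capsule layer with one 16x8 matrix per (output capsule, input capsule) pair, and a decoder made of dense layers with 512, 1024 and 784 units. The helper names are mine.

```python
def conv2d_params(kernel, in_channels, filters):
    """Weights plus one bias per filter for a square-kernel Conv2D layer."""
    return kernel * kernel * in_channels * filters + filters

def dense_params(n_in, n_out):
    """Weights plus one bias per output unit for a Dense layer."""
    return n_in * n_out + n_out

conv1 = conv2d_params(9, 1, 256)          # assumed 9x9 kernel on the 1-channel input
primarycap = conv2d_params(9, 256, 256)   # assumed 9x9 kernel, 256 -> 256 channels
digitcaps = 10 * 1152 * 16 * 8            # assumed: one 16x8 matrix per capsule pair, no bias
decoder = (dense_params(160, 512)         # assumed decoder widths: 160 -> 512 -> 1024 -> 784
           + dense_params(512, 1024)
           + dense_params(1024, 784))

total = conv1 + primarycap + digitcaps + decoder
print(conv1, primarycap, digitcaps, decoder, total)
# prints: 20992 5308672 1474560 1411344 8215568
```

Under these assumptions the numbers agree with the summary line by line, and they show that roughly two thirds of all parameters sit in the primary capsule convolution.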

    ...
    Epoch 50
    ...
    600/600 [==============================] - 531s 885ms/step - loss: 0.0435 - capsnet_loss: 0.0249 - decoder_loss: 0.0475 - capsnet_acc: 0.9783 - val_loss: 0.0762 - val_capsnet_loss: 0.0509 - val_decoder_loss: 0.0645 - val_capsnet_acc: 0.9472
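The three loss values in that log line are related. Assuming the total loss is the capsule loss plus the reconstruction (decoder) loss weighted by `lam_recon`, which is how I read the training script, the logged totals can be recomputed from their parts. The function name below is hypothetical, and the inputs are the rounded values from the log.

```python
LAM_RECON = 0.392  # value taken from the Namespace line of the run

def total_loss(capsnet_loss, decoder_loss, weight=LAM_RECON):
    """Assumed combination: capsule loss + weight * reconstruction loss."""
    return capsnet_loss + weight * decoder_loss

train_total = total_loss(0.0249, 0.0475)
valid_total = total_loss(0.0509, 0.0645)

print(round(train_total, 4), round(valid_total, 4))
# prints: 0.0435 0.0762
```

Both values match the logged `loss` and `val_loss`, so the reconstruction term contributes a bit less than half of the training loss and about a third of the validation loss.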

We can see that KMNIST is much more challenging for ML algorithms than MNIST: after 50 epochs, this run reaches 97.8% training accuracy but only 94.7% validation accuracy. Training is also slower. While this standard CapsNet example should take ~100-120 s/epoch on MNIST, it takes ~8-9 min/epoch on KMNIST.

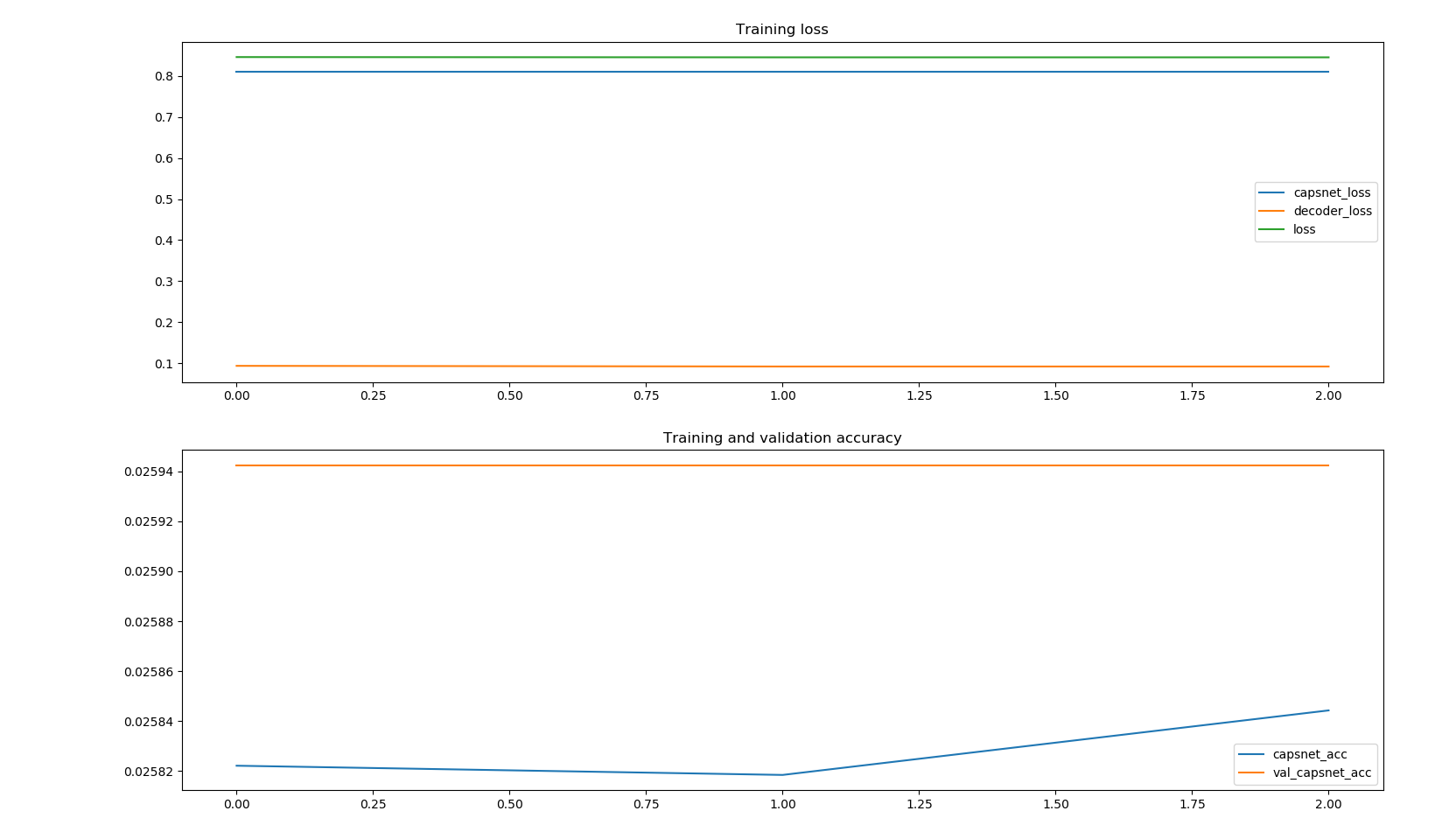

K-49

Let’s keep it short. Running the default CapsNet structure on the K-49 dataset leads to training times of 2-2.5 h/epoch, and it seems that building a useful model would require quite a lot of computation.
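For reference, the loader change for K-49 can be written once for both datasets. This is a sketch, not the code I ran: `load_kuzushiji` is a hypothetical name, the `k49-` file prefix and the shared data directory are assumptions about how the dataset files are laid out, and `np.eye` indexing replaces `to_categorical` to keep the example free of Keras.

```python
import os
import numpy as np

def load_kuzushiji(data_dir, prefix, num_classes):
    """Load a Kuzushiji dataset stored as <prefix>-{train,test}-{imgs,labels}.npz.

    Hypothetical helper; the file naming scheme is an assumption.
    """
    def load(name):
        path = os.path.join(data_dir, f"{prefix}-{name}.npz")
        return np.load(path)["arr_0"]

    # Scale pixel values to [0, 1] and add the channel axis, as in load_mnist().
    x_train = load("train-imgs").reshape(-1, 28, 28, 1).astype("float32") / 255.
    x_test = load("test-imgs").reshape(-1, 28, 28, 1).astype("float32") / 255.

    # One-hot encode by picking rows of the identity matrix.
    identity = np.eye(num_classes, dtype="float32")
    y_train = identity[load("train-labels")]
    y_test = identity[load("test-labels")]
    return (x_train, y_train), (x_test, y_test)

# Example calls (paths are assumptions):
# load_kuzushiji("./Kuzushiji-MNIST/data", "kmnist", 10)
# load_kuzushiji("./Kuzushiji-MNIST/data", "k49", 49)
```

Passing the number of classes explicitly avoids a one-hot matrix that is too narrow if the highest class happens to be missing from a subset.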

I am going to run this for 50 epochs as well, but only after improving the training process to reduce costs.
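To put the cost into perspective, here is the back-of-the-envelope arithmetic for 50 epochs, using the 531 s/epoch from the KMNIST log and the observed 2-2.5 h/epoch on K-49. It assumes the epoch time stays constant over the whole run.

```python
EPOCHS = 50

# KMNIST: 531 s/epoch, taken from the training log.
kmnist_hours = 531 * EPOCHS / 3600

# K-49: observed range of 2 to 2.5 h/epoch.
k49_low_hours = 2.0 * EPOCHS
k49_high_hours = 2.5 * EPOCHS

print(f"KMNIST: {kmnist_hours:.1f} h")
print(f"K-49:   {k49_low_hours:.0f}-{k49_high_hours:.0f} h")
# prints:
# KMNIST: 7.4 h
# K-49:   100-125 h
```

That is four to five days of uninterrupted training for K-49, which is why the training process needs to get cheaper first.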